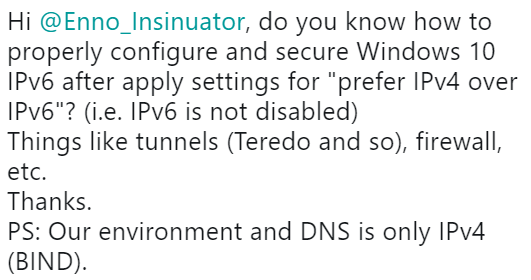

Starting a post, in 2019, with a mention of something being “IPv4-only” somewhat hurts ;-), but here we go. Recently Manel Rodero from Barcelona asked me the following question on Twitter:

In this post I’ll try to discuss some inherent aspects of that question and, of course, I’ll try to provide a response to it, too ;-).

Let’s first think about the main IPv6-related risks (= threats put into a context of relevance) in an “environment [that] is only IPv4”. While some of you might scratch your heads (“what IPv6 threats could there be in an IPv4 setting?”), I’m tempted to scratch mine: “what could be the reasons to run a university network without IPv6 these days, or to use BIND?” (on which I have a strong opinion, see here or here). But I digress. More seriously, the main reason for the question can be broken down as follows:

- for a few years now, operating Windows OSs in a way that is considered “compliant with Microsoft Support requirements” has meant that IPv6 must be “enabled”, by some definition of “enabled” (more on this in a second).

- this in turn means that even in a seemingly IPv4-only environment, systems running Windows Server 2016/Windows 10 (and other versions since Vista) will have *some* IPv6.

The Microsoft document “Guidance for configuring IPv6 in Windows for advanced users” (which, to my knowledge, is the most recent advice of its kind) states:

“We recommend that you use ‘Prefer IPv4 over IPv6’ in prefix policies instead of disabling IPV6.”

which is exactly the approach Manel described in his tweet. From a technical perspective this leads to a system IPv6 state where

- IPv6 is fully enabled but, in the absence of IPv6-enabled routers emitting RAs, only link-local addresses (LLAs) are configured.

- by the above approach (setting the so-called prefix policies in a way that sends traffic over IPv4 wherever possible), only IPv4 is supposed to be used.

- unless something bad happens (which is the core discussion point of this post), there’s not much risk anyway that Windows-internal communication (e.g. authentication with a DC) happens over IPv6 with LLAs, as that type of communication is DNS-based (and LLAs don’t end up in DNS by means of dynamic updates or the like).
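To illustrate what “Prefer IPv4 over IPv6” changes, here is a minimal Python sketch of prefix-policy based destination ordering in the style of RFC 6724. It is deliberately simplified: it only applies Rule 6 (prefer the higher precedence), uses a subset of the RFC 6724 default table, and the precedence value 45 in the “prefer IPv4” table is an illustrative assumption, not necessarily the value Windows writes. The function names are hypothetical.

```python
import ipaddress

# A subset of the RFC 6724 default policy table: (prefix, precedence, label).
# IPv4 destinations are looked up as IPv4-mapped addresses (::ffff:0:0/96).
DEFAULT_POLICY = [
    ("::1/128", 50, 0),
    ("::/0", 40, 1),
    ("::ffff:0:0/96", 35, 4),
    ("2002::/16", 30, 2),
    ("2001::/32", 5, 5),
    ("fc00::/7", 3, 13),
]


def prefer_ipv4(policy):
    """Return a copy of the table where IPv4-mapped destinations outrank ::/0.

    45 is an assumption; any value above the ::/0 precedence (40) has the
    same effect in this sketch.
    """
    return [(p, 45 if p == "::ffff:0:0/96" else prec, label)
            for p, prec, label in policy]


def as_v6(addr):
    """Map an IPv4 address to ::ffff:a.b.c.d; pass IPv6 addresses through."""
    ip = ipaddress.ip_address(addr)
    return ipaddress.IPv6Address("::ffff:" + str(ip)) if ip.version == 4 else ip


def precedence(policy, addr):
    """Precedence of the longest matching prefix (::/0 always matches)."""
    best_len, best_prec = -1, 0
    for prefix, prec, _label in policy:
        net = ipaddress.IPv6Network(prefix)
        if addr in net and net.prefixlen > best_len:
            best_len, best_prec = net.prefixlen, prec
    return best_prec


def order_destinations(policy, candidates):
    """Sort destinations by precedence only (RFC 6724 Rule 6).

    sorted() is stable, so equal precedences keep their input order.
    """
    return sorted(candidates,
                  key=lambda a: precedence(policy, as_v6(a)),
                  reverse=True)


# A name resolving to both an AAAA and an A record:
candidates = ["2001:db8::10", "192.0.2.10"]
print(order_destinations(DEFAULT_POLICY, candidates))
# ['2001:db8::10', '192.0.2.10']
print(order_destinations(prefer_ipv4(DEFAULT_POLICY), candidates))
# ['192.0.2.10', '2001:db8::10']
```

In a real stack the other selection rules (e.g. scope matching against the available source addresses) apply as well, which is one reason why LLA-only hosts rarely pick IPv6 for off-link destinations in the first place.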

To use a fairytale-oriented analogy I’ve used in the past: as long as no prince in the form of a router advertisement shows up, the sleeping beauty in the form of a system’s IPv6 stack will remain (mostly) dormant.

So far so good in theory; there’s only one main problem: what if, for whatever reason, a puzzled prince (a misconfigured network device or one that simply shouldn’t be there, like a device a user brought from home; these things happen 😉) or, even worse, a malicious one (do those even exist in the common corpus of fairytales?) shows up?

Read: to what degree can operations be disturbed, or specific attacks be carried out, once certain IPv6 packets appear in the environment?

Let’s focus on two main vectors here:

- a (misconfigured/malicious) entity acts as IPv6 router (hence: it sends RAs).

- a (misconfigured/malicious) entity acts as DHCPv6 server (hence: it sends DHCPv6 Advertise or Reply messages).

Rogue RAs

In the past we’ve considered these one of the most significant risks in IPv6 networks (see for example slide #23 from my #TR16 IPv6 Security Summit talk on “Developing an Enterprise IPv6 Security Strategy”), but the evaluation might be different in our specific case here. The tricky part is that IPv6-enabled (but “dormant”) systems will come to a new level of (IPv6) life once they receive such a packet. Usually (barring very specific configuration steps, see below, or non-default properties of the RAs themselves) they will create two global addresses, incl. a temporary one, and they will add the source address of the packet as their IPv6 default gateway. *Whether* IPv6 is then actually used for outbound connections (and for which ones) depends on various factors. Suffice it to say that simply assuming “IPv6 will be preferred over IPv4” is usually not correct. How clients/client-side applications decide between IPv4 and IPv6 in a dual-stack setting depends on a number of parameters that would be worth a dedicated post (=> not covered in this one). On the other hand, it’s probably a safe assumption that *some* traffic will then happen over v6.
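The “waking up” of a dormant stack can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Windows’ actual implementation: the function names are hypothetical, both addresses use random 64-bit interface identifiers (modelled on randomized identifiers rather than EUI-64), and Duplicate Address Detection, lifetimes, temporary-address regeneration and the default-route entry are left out. `is_dormant()` just checks whether a host has nothing but link-local (or loopback) IPv6 addresses.

```python
import ipaddress
import secrets


def is_dormant(addresses):
    """True if every IPv6 address is link-local (fe80::/10) or loopback,
    i.e. no RA has (yet) given the host a routable prefix."""
    parsed = [ipaddress.IPv6Address(a) for a in addresses]
    return all(a.is_link_local or a.is_loopback for a in parsed)


def slaac_addresses(prefix):
    """Form a 'stable' and a 'temporary' address from an RA-advertised /64.

    Assumption: both use random 64-bit interface identifiers; DAD,
    lifetimes and regeneration of temporary addresses are left out.
    """
    net = ipaddress.IPv6Network(prefix)
    if net.prefixlen != 64:
        raise ValueError("SLAAC expects a /64 prefix")
    base = int(net.network_address)
    # 'or 1' avoids the all-zero IID (the Subnet-Router anycast address).
    stable = ipaddress.IPv6Address(base | (secrets.randbits(64) or 1))
    temporary = ipaddress.IPv6Address(base | (secrets.randbits(64) or 1))
    return stable, temporary


# Before any RA: only a link-local address, the stack is "asleep".
addrs = ["fe80::1c2b:3aff:fe4d:5e6f"]
print(is_dormant(addrs))  # True

# A (possibly rogue) RA advertises 2001:db8:1:2::/64.
stable, temporary = slaac_addresses("2001:db8:1:2::/64")
addrs += [str(stable), str(temporary)]
print(is_dormant(addrs))  # False
```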

Rogue DHCPv6 Packets

Given that the default gateway can’t be spoofed by means of DHCPv6 packets, probably the main risk here is the injection of false DNS resolvers, which can then be abused for subsequent attacks. A prominent example is the attack which Dirk-jan Mollema laid out in his excellent post on “mitm6 – compromising IPv4 networks via IPv6” (btw, he’ll give a cool talk on Azure AD at #TR19).
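To show where such resolver information lives on the wire, here is a minimal Python sketch that extracts the DNS Recursive Name Server option (option code 23, RFC 3646) from a DHCPv6 Advertise or Reply message (message layout per RFC 8415: 1-byte msg-type, 3-byte transaction-id, then TLV options). The function names and the `fe80::bad` resolver address are hypothetical and only serve as an example; this is not how mitm6 itself is implemented.

```python
import ipaddress
import struct

ADVERTISE, REPLY = 2, 7          # DHCPv6 message types (RFC 8415)
OPTION_DNS_SERVERS = 23          # RFC 3646


def iter_options(data):
    """Yield (code, value) pairs from a DHCPv6 options area."""
    off = 0
    while off + 4 <= len(data):
        code, length = struct.unpack_from("!HH", data, off)
        off += 4
        if off + length > len(data):
            raise ValueError("truncated option")
        yield code, data[off:off + length]
        off += length


def dns_servers(message):
    """Return the resolver addresses a DHCPv6 Advertise/Reply hands out."""
    if len(message) < 4:
        raise ValueError("message too short")
    if message[0] not in (ADVERTISE, REPLY):
        return []
    servers = []
    # Skip msg-type (1 byte) and transaction-id (3 bytes).
    for code, value in iter_options(message[4:]):
        if code == OPTION_DNS_SERVERS:
            if len(value) % 16:
                raise ValueError("malformed DNS servers option")
            servers += [ipaddress.IPv6Address(value[i:i + 16])
                        for i in range(0, len(value), 16)]
    return servers


# A minimal Reply carrying a (hypothetical) rogue resolver address.
rogue = ipaddress.IPv6Address("fe80::bad")
reply = (bytes([REPLY]) + b"\x00\x00\x01"
         + struct.pack("!HH", OPTION_DNS_SERVERS, 16) + rogue.packed)
print(dns_servers(reply))  # [IPv6Address('fe80::bad')]
```

A client accepting this Reply would start sending its DNS queries to that address, which is the foothold for the follow-up attacks described in the mitm6 post.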

Now – long-time readers of this blog know that I really love long introductions 😉 – let’s finally tackle the OP’s question: how would one mitigate the associated risks?

From a high-level perspective we have to differentiate between:

- controls on the network/infrastructure level and

- controls on the host level.

Among other things, I had this dichotomy in mind when I separated the “Protecting Hosts in IPv6 Networks” talk from the already cited Enterprise Strategy talk, which focused on infrastructure-level controls. The former also contains links to the “Hardcore IPv6 Hardening Guides” that Antonios Atlasis authored many years ago.

Network Level Mitigations

There are two well-known approaches against the above risks:

- RA Guard

- DHCPv6 Guard.

Regarding their use, there are slight differences between wired (Ethernet) and wireless networks, which is why I differentiate between the two in the following.

Wired Ethernet

Usually these two features have to be enabled on switches (by default they’re not). There are different ways to do this on different platforms, but in general these are well-known parameters/settings, so I won’t cover them in detail; as a starting point you may look at slides 42–27 from the Enterprise IPv6 Security talk cited above.

With the right tools (Chiron comes to mind) and a bit of effort, pretty much all implementations (on Cisco devices; we didn’t test others) can be evaded, as discussed in Antonios’ “DHCPv6 Guard: Do It Like RA Guard Evasion” post or in the ERNW whitepaper “RA Guard Evasion Revisited”.
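To make the evasion point more tangible, here is a minimal Python sketch of the decision an RA Guard / DHCPv6 Guard feature has to make for a packet arriving on an untrusted port. It is not how Cisco or any other vendor implements these features; the helper names and the three verdicts are hypothetical. The interesting case is `"unknown"`: when the upper-layer header sits behind extension headers or in a later fragment, the filter has to walk the header chain and then decide what to do with packets it cannot classify, which is exactly what evasion techniques target (RFC 7113 discusses how RA Guard implementations should handle such cases).

```python
import struct

HOP_BY_HOP, ROUTING, FRAGMENT, DEST_OPTS = 0, 43, 44, 60
ICMPV6, UDP = 58, 17
ROUTER_ADVERTISEMENT = 134
DHCPV6_SERVER_PORT = 547
# ADVERTISE, REPLY, RECONFIGURE, RELAY-REPL: sent by servers/relays only
DHCPV6_SERVER_MSGS = {2, 7, 10, 13}


def upper_layer(packet):
    """Walk the IPv6 extension header chain.

    Returns (next_header, offset) of the upper-layer header, or None when it
    cannot be located in this packet (truncated chain, non-first fragment).
    """
    if len(packet) < 40 or packet[0] >> 4 != 6:
        return None
    nh, off = packet[6], 40
    while nh in (HOP_BY_HOP, ROUTING, DEST_OPTS, FRAGMENT):
        if off + 8 > len(packet):
            return None
        if nh == FRAGMENT:
            frag_offset = struct.unpack_from("!H", packet, off + 2)[0] >> 3
            if frag_offset != 0:
                return None  # upper-layer header lives in another fragment
            nh, off = packet[off], off + 8
        else:
            # Hdr Ext Len counts 8-octet units beyond the first 8 octets.
            nh, off = packet[off], off + (packet[off + 1] + 1) * 8
    return nh, off


def guard_verdict(packet):
    """'drop', 'pass' or 'unknown' for a packet seen on an untrusted port."""
    located = upper_layer(packet)
    if located is None:
        return "unknown"
    nh, off = located
    if nh == ICMPV6:
        if off >= len(packet):
            return "unknown"
        return "drop" if packet[off] == ROUTER_ADVERTISEMENT else "pass"
    if nh == UDP:
        if off + 9 > len(packet):
            return "unknown"
        src_port = struct.unpack_from("!H", packet, off)[0]
        msg_type = packet[off + 8]  # first byte after the 8-byte UDP header
        if src_port == DHCPV6_SERVER_PORT and msg_type in DHCPV6_SERVER_MSGS:
            return "drop"
    return "pass"


def ipv6(next_header, payload):
    """Bare IPv6 header (addresses zeroed) plus payload, for the examples."""
    hdr = bytearray(40)
    hdr[0] = 0x60
    struct.pack_into("!HB", hdr, 4, len(payload), next_header)
    return bytes(hdr) + payload


ra = ipv6(ICMPV6, bytes([134, 0, 0, 0]) + bytes(12))
ra_behind_dstopts = ipv6(DEST_OPTS, bytes([ICMPV6, 0]) + bytes(6)
                         + bytes([134, 0, 0, 0]))
tail_fragment = ipv6(FRAGMENT, bytes([ICMPV6, 0]) + struct.pack("!H", 1 << 3)
                     + bytes(4) + bytes(8))
print(guard_verdict(ra), guard_verdict(ra_behind_dstopts),
      guard_verdict(tail_fragment))
# drop drop unknown
```

A naive filter that only inspects the byte right after the fixed IPv6 header would already miss `ra_behind_dstopts`; how the `"unknown"` case is resolved decides whether fragmentation-based tricks get through.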

Still, we consider these two basic protection measures that should be enabled on all “untrusted”/client-facing switch ports, and (this being the main point of this post) even in a seemingly v4-only network. Consider this basic network hygiene in 2019!

WiFi

At least in wireless networks based on Cisco equipment, both controls are enabled by default, and you might not even be able to disable them (see Christopher Werny’s “Case Study: Building a Secure and Reliable IPv6 Guest WiFi Network” talk from the #TR16 IPv6 Security Summit, slides #50ff.). We’ve never tested the resiliency of these implementations when confronted with extension headers, fragmentation and the like, but this is on our list of upcoming lab activities. Nonetheless, they should usually be “good enough” to counter most negative scenarios.

Mitigations on the Host Level

First, I’d like to note that, in general, I think these problems should be solved on the network level. I know that factors like different departments being responsible, not having control over one domain or the other, or not trusting the department (or their outsourcing partner) in charge to do their job properly can all lead to a different stance. Still #justsaying 😉

In case one chooses to address these on the host level (let’s keep in mind, again, that this was the original question), the following options come to mind:

- Disabling the processing of RAs on the host level. While this can technically be done (for details see the Protecting Hosts talk mentioned above), I’m a strong opponent of this approach for various reasons (it might be difficult to maintain this setting over the full lifecycle of a system, it constitutes a “deviation from default”, it’s against IPv6 core architecture, there’s a special place in operational hell for people doing such stuff 😉 etc.).

- Get rid of DHCPv6 on the host level. That is an interesting one. Many people, incl. myself, have lamented the (as I think) rather strange approach of Microsoft OSs sending out DHCPv6 Solicit messages without being encouraged to do so by the respective flag in RAs. The point is: how to get rid of it? Usually disabling the DHCP client service is not an option (for reasons laid out in this post). What one can do instead is disable DHCP only for the IPv6 address family by means of the following PowerShell command:

```powershell
Set-NetIPInterface -InterfaceAlias Ethernet -AddressFamily IPv6 -Dhcp Disabled
```

I don’t think this is part of any default GPO settings, but it shouldn’t be too difficult to integrate it into a custom .admx file either.

- Local packet filtering (e.g. with the Windows Firewall). I’ve covered some related stuff here (focused on systems in virtualized datacenters) but from a management perspective most probably this is not a viable approach for client systems (let alone “unmanaged” ones which often constitute the majority in university networks).
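For context on the “respective flag in RAs” mentioned in the DHCPv6 bullet above: RFC 4861 (section 4.2) defines the Managed (M) and Other configuration (O) flags in the fixed part of a Router Advertisement. The minimal Python sketch below parses them and derives the flag-driven DHCPv6 behaviour a client could follow; the function names and return values are hypothetical. The behaviour criticized above is that Windows sends Solicits even without such an RA, i.e. in the `None` case.

```python
import struct

ROUTER_ADVERTISEMENT = 134


def ra_flags(icmpv6):
    """Parse M/O flags and Router Lifetime from an RA (RFC 4861, 4.2).

    `icmpv6` starts at the ICMPv6 Type field; the fixed RA part is 16 bytes.
    """
    if len(icmpv6) < 16 or icmpv6[0] != ROUTER_ADVERTISEMENT:
        raise ValueError("not a Router Advertisement")
    flags = icmpv6[5]  # byte 4 is Cur Hop Limit, byte 5 carries M|O|...
    lifetime = struct.unpack_from("!H", icmpv6, 6)[0]
    return {
        "managed": bool(flags & 0x80),  # M: addresses via DHCPv6
        "other": bool(flags & 0x40),    # O: other config (e.g. DNS) via DHCPv6
        "router_lifetime": lifetime,    # 0 = not a default router
    }


def dhcpv6_mode(ra):
    """DHCPv6 usage the RA flags ask for; None means no RA was seen."""
    if ra is None or not (ra["managed"] or ra["other"]):
        return "none"
    return "stateful" if ra["managed"] else "stateless"


ra = bytes([134, 0, 0, 0, 64, 0x40]) + struct.pack("!H", 1800) + bytes(8)
print(dhcpv6_mode(ra_flags(ra)))  # stateless
print(dhcpv6_mode(None))          # none
```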

Before I conclude, it should be noted that deactivating the tunnel technologies Manel mentioned in his tweet shouldn’t be needed as a dedicated step on Windows 10 anymore (as this MS TechNet article lays out).

So, finally getting back to the initial question, here’s my response. From an overall security-benefit vs. operational-feasibility perspective, these are the things you should do when operating Win 10 clients in a v4-only environment:

- remove the IPv6 address family from the DHCP client service to get rid of unsolicited DHCPv6 engagements.

- take care that the underlying network infrastructure has RA Guard and DHCPv6 Guard enabled for all client-facing “ports”.

- touching the Windows Firewall shouldn’t be necessary.

- put IPv6 on your roadmap ;-).

As always I’m happy to receive any type of feedback. If you want to discuss this stuff with us in person, the Troopers NGI IPv6 track on March 19th could be a good opportunity (while the main conference is sold out you can still register for NGI which has a pretty interesting agenda as well).

Everybody have a great week,

Enno