Hardening guides for the systems that Puppet manages are easy to find; guides for hardening Puppet itself are not.

Puppet, the enterprise software configuration management (SCM) tool, is valued by many sysadmins and DevOps teams, e.g. at Google, for scalable, continuous and secure deployment of application server configuration files across large heterogeneous system landscapes, and increasingly also as an “end-to-end” compliance solution.

Disclaimer:

This blog post does not present anything new about Puppet security. It aims to raise security awareness and to summarize useful attack and audit techniques for an internal blackbox and whitebox infrastructure assessment of a Puppet Enterprise landscape.

Most of the information in this post was collected during, and is based on, a time-limited graybox assessment of a Puppet landscape (Puppet Enterprise version 6.4.0 on RHEL7).

Hence, this post makes no claim to completeness and should not be considered a fully fledged Puppet hardening guide.

Background

Similar to its newer competitor Chef, Puppet is based on an open-source core written in the cross-platform language Ruby, but with an abstruse domain specific language (DSL) for configuration specification and automation in a declarative style.

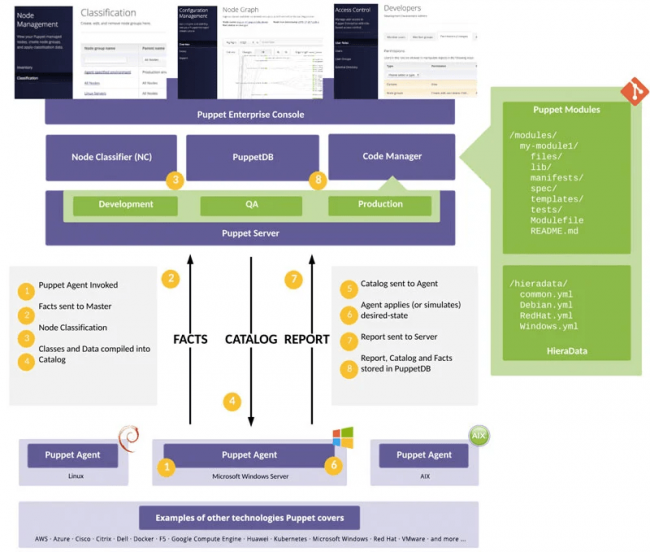

Puppet uses a (multi) master-agent architecture where the Puppet agent is deployed on the end system, e.g. a web server, and retrieves application configuration file updates from the central Puppet master server or server cluster via mTLS secured HTTP pulls (see Figure 1).

By default, the Puppet master exposes multiple web services on HTTPS/8140 (Nginx) and often also a Foreman Smart Proxy service on HTTPS/8443 to dispatch requests into other internal or external services, such as the Puppet Certificate Authority (CA) service.

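If a Puppet agent on a new application server wants to join the Puppet landscape, the agent submits a certificate signing request (CSR) for a TLS client certificate to the Puppet CA service. The CSR lives under the following API endpoint (according to the Puppet CA HTTP API documentation, PUT on this path submits a CSR and GET returns a previously submitted one):

GET https://PUPPETCA-SERVER:8140/puppet-ca/v1/certificate_request/:nodename?environment=:environment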

After an administrator has manually granted the signing request, the Puppet master trusts the agent’s client certificate and allows the agent to pull its node-specific configuration catalogs via another API endpoint on HTTPS/8140.

Configuration catalogs are grouped by target environment (development, QA and production) and into modules, each with a manifest file and the application-specific config files, as shown in the directory structure on the right of Figure 1.

Once the agent has updated the local application server configuration files according to the pulled configuration catalog, it reports the results back to the master server.
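The enrollment request can be sketched in shell. This is a minimal sketch, not the agent's actual implementation: the helper name `puppet_csr_url`, the key size and the file names are assumptions made for illustration. The URL follows the endpoint pattern shown above, and submitting with PUT and a `text/plain` body is my reading of the Puppet CA HTTP API documentation, so verify it against your target version.

```shell
#!/usr/bin/env bash
# Hypothetical helper: builds the CSR endpoint URL from the pattern shown above.
# Arguments: CA server, node name, environment (defaults to "production").
puppet_csr_url() {
  local server="$1" node="$2" env="${3:-production}"
  printf 'https://%s:8140/puppet-ca/v1/certificate_request/%s?environment=%s\n' \
    "$server" "$node" "$env"
}

# Example usage (commented out so that sourcing this file sends nothing):
# openssl req -new -newkey rsa:4096 -nodes -subj "/CN=NODE-NAME" \
#   -keyout node.key -out node.csr
# curl -k -X PUT -H 'Content-Type: text/plain' --data-binary @node.csr \
#   "$(puppet_csr_url PUPPETCA-SERVER NODE-NAME production)"
```

The request itself stays in comments on purpose, because a submitted CSR shows up in the administrator's signing queue and should only be sent with the customer's consent.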

Administrators can later analyze the success or failure of configuration changes, e.g. within the administrative web interface of the Puppet master.

The attentive reader might notice that the TLS client certificate of the agent is the only means of authentication for configuration change transactions and that manually granting the initial client certificate signing request might be cumbersome, especially in the event of malicious rogue agents. More about this later.

Even though a compromise of the Puppet master server results in a full compromise of the whole Puppet landscape (see later), Puppet agents, in contrast to other SCM solutions, increase the end system’s attack surface only minimally, and the architecture does not enable an attacker who has compromised one agent system to directly compromise other agent systems as well.

However, those security benefits come with a steep learning curve for the Puppet DSL and with opaque and inconsistent setting options across multiple configuration files, which frequently results in misconfigurations of the Puppet landscape and its TLS certificate management.

Puppet Documentation and Configuration Files

The documentation for the latest Puppet Enterprise and Open-Source versions can be found at:

- https://puppet.com/docs/puppet/latest/puppet_index.html

- https://puppet.com/docs/puppetserver/latest/index.html

IMPORTANT: Select your target Puppet version when studying the documentation (version select box), because the location of configuration files, semantics of settings and their default values may differ greatly between the different Puppet versions.

Here are a few of the most important configuration directories of a Puppet Enterprise master and agent deployment, which reside by default under /etc/puppetlabs/:

- Puppet master configuration directory: /puppetserver/ (contains the master’s puppetserver.conf and webserver.conf files)

- Puppet master configuration catalog directory: /puppetserver/code/ (contains the environment-specific app server config files that are to be deployed on the agent nodes)

- Puppet agent configuration directory: /etc/puppetlabs/puppet/ (contains the confdir, including the puppet.conf and auth.conf files, as well as the SSL directory, which are most crucial on agent nodes but also on the master server)

IMPORTANT: The Puppet master server itself also has a Puppet agent installed, with agent configs under /etc/puppetlabs/puppet/ that then apply to the Puppet server and its services. This means that the sensitive TLS certificates and keys reside on both the Puppet master server and the agent nodes under the same path /etc/puppetlabs/puppet/ssl/certs, and that services running on the master (e.g. PuppetDB, pe-orchestration-services and pe-console-services) use the master’s agent certificate to authenticate.

Additionally, a secret key file under /etc/puppetlabs/orchestration-services/conf.d/secrets/keys.json is generated during installation that is used to encrypt and decrypt sensitive data stored in the inventory service.

Pre-Consultancy Questions

Before diving into the techniques to check the Puppet landscape security, make sure to ask your customer the following questions in the assessment kickoff:

- Which version of Puppet do you use (Open source or enterprise, 4.x, 5.x or 6.x)?

- Do you have the CA service on a different system or do you use the inbuilt CA service of the Puppet master server?

- Do you have the Puppet database service on a different system or on the Puppet master server?

- Do you use an internal Git repository for Puppet configuration catalog versioning and, if yes, who has commit access?

- Do you actively use sub-services of/for Puppet, such as Bolt, Capistrano, Hiera, R10k, Fabric or Razor, and for what purpose?

- Which configuration catalog “environment” is provided by the Puppet master server and in scope to be used for the agent nodes (usually: “production”)?

Blackbox Assessment Techniques

So you found a Puppet master server listening on HTTPS/8140 during a blackbox internal infrastructure assessment?

Below are a few common security issues that result from Puppet master server misconfigurations and can be detected from a blackbox perspective.

Sensitive Information Exposure in Puppet Master API Endpoints

- Is the service status endpoint exposed? (Available by default. It gives you the Puppet server version and sub-service states; the Nginx version can likely also be seen in the HTTP Server header of the response.)

    curl -k -X GET https://PUPPET-SERVER:8140/status/v1/services
    {"puppet-profiler":{"service_version":"6.3.0","service_status_version":1,[...]],"active_alerts":[]}}
    # This is not really interesting, but it should show the enabled foreman-proxy features
    curl -k -X GET https://PUPPET-SERVER:8443/features

- Is the Jolokia metrics endpoint exposed? (Available by default. It provides detailed debugging information from a JMX interface/proxy.)

    curl -k -X GET https://PUPPET-SERVER:8140/metrics/v2/version
    {"request":{"type":"version"},"value":{"agent":"1.6.0",[...]},"timestamp":1593690953,"status":200}
    curl -k -X GET https://PUPPET-SERVER:8140/metrics/v2/list
    curl -k -X GET https://PUPPET-SERVER:8140/metrics/v2/search/*
    curl -k -X GET https://PUPPET-SERVER:8140/metrics/v2/read/java.util.logging:type=Logging/LoggerNames
    # Definitely check that write and exec operations are not allowed!
    curl -k -X GET https://PUPPET-SERVER:8140/metrics/v2/write/...
    curl -k -X GET https://PUPPET-SERVER:8140/metrics/v2/exec/...

- Is the Puppet Admin API accessible? (This gets interesting if you compromised an agent system and found TLS client certificates and keys, e.g. in backup directories with weak file permissions. CLIENT-CERT.pem, CLIENT-KEY.pem and CA-CERT.pem are placeholders for the files you found.)

    curl -i --cert CLIENT-CERT.pem --key CLIENT-KEY.pem --cacert CA-CERT.pem -X DELETE https://PUPPET-SERVER:8140/puppet-admin-api/v1/environment-cache
    curl -i --cert CLIENT-CERT.pem --key CLIENT-KEY.pem --cacert CA-CERT.pem -X DELETE https://PUPPET-SERVER:8140/puppet-admin-api/v1/jruby-pool
    curl -si --cert CLIENT-CERT.pem --key CLIENT-KEY.pem --cacert CA-CERT.pem -X GET https://PUPPET-SERVER:8140/puppet-admin-api/v1/jruby-pool/thread-dump
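To compare the exposed versions against known vulnerabilities, it helps to pull the version strings out of the status response. The following is a minimal sketch, assuming the compact JSON layout of the sample response above; `service_versions` is a hypothetical helper name, and a real JSON parser such as jq is the better choice for anything beyond a quick look.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads a /status/v1/services JSON response on stdin and
# prints every distinct "service_version" value, one per line.
# Assumes compact JSON without whitespace around the colon.
service_versions() {
  grep -o '"service_version":"[^"]*"' | cut -d'"' -f4 | sort -u
}

# Example usage (commented out so that sourcing this file sends nothing):
# curl -sk https://PUPPET-SERVER:8140/status/v1/services | service_versions
```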

Naive/Basic-Auto Signing Enabled

If naive or basic auto-signing is enabled on the Puppet master, or more specifically on the Puppet CA service (instead of the more secure policy-based signing), rogue Puppet agents could steal application server configuration files by requesting a TLS client certificate in the name of other agent-nodes and then impersonating those agent-nodes.

This dangerous setting might have been enabled during the initial setup of the landscape and never disabled at go-live, or it might be enabled due to faulty assumptions about default values.

Naive signing can be detected and abused with the Nmap script puppet-naivesigning.nse [1]:

nmap -sSVC --privileged -vvv --reason -p 8140 --script puppet-naivesigning --script-args puppet-naivesigning.csr=/path/to/csr.pem,puppet-naivesigning.env=production,puppet-naivesigning.node=DomainnameOfAppServerControlledByPuppet PUPPET-SERVER

- puppet-naivesigning.env (the environment that is provided to the endpoints; default: “production”)

- puppet-naivesigning.csr (the file containing the Certificate Signing Request to replace the default one; default: nil)

- puppet-naivesigning.node (the FQDN of the agent-node in the CSR that you want to impersonate; default: “agentzero.localdomain”)

However, as this misconfiguration poses a great risk (think credentials in app config files) and basic auto-signing is more difficult to detect with this script, you should ask your customer’s technical point of contact to provide you with the Puppet master config files puppet.conf and autosign.conf.

Check the autosign setting in the [master] section of puppet.conf. According to the Puppet documentation, the following line enables naive auto-signing, i.e. every incoming CSR is signed:

autosign = true

Unless autosign is set to false, basic or policy-based auto-signing might still be enabled through the file that the setting points to (by default autosign.conf in the confdir), so every auto-signing configuration is worrisome and deserves a closer look. If that file is a non-empty DNS/hostname whitelist, basic auto-signing is enabled and all agent-nodes whose names match the entries (exact names or domain name globs such as *.example.com, not regular expressions) could be impersonated (think DNS spoofing). If the file is not a whitelist but an executable, it is the policy executable used for policy-based auto-signing. In this case, you should ask the customer for details about the purpose, the rules and the deployment logic of the auto-signing policy to explore potential logic flaws. You could also try to reverse engineer the policy executable.
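Once the customer has handed over puppet.conf and autosign.conf, the triage can be sketched as follows. This is a sketch under assumptions, not Puppet's own logic: the helper names `autosign_mode` and `whitelist_matches` are made up, the mapping of values to modes follows my reading of the Puppet autosigning documentation (`true` is naive, `false` is disabled, an executable file is a policy, any other existing file is a whitelist), and shell globbing only approximates how Puppet matches domain name globs.

```shell
#!/usr/bin/env bash
# Hypothetical helper: classifies the value of the autosign setting.
#   true            -> naive    (every CSR is signed)
#   false           -> disabled
#   executable file -> policy   (policy-based auto-signing)
#   other file      -> basic    (whitelist), or disabled if the file is missing
autosign_mode() {
  local value="$1"
  case "$value" in
    true)  echo naive ;;
    false) echo disabled ;;
    *)
      if [ -f "$value" ] && [ -x "$value" ]; then
        echo policy
      elif [ -f "$value" ]; then
        echo basic
      else
        echo disabled
      fi
      ;;
  esac
}

# Hypothetical helper: succeeds if the node name matches any whitelist entry.
# Blank lines and lines starting with '#' are skipped (assumption).
whitelist_matches() {
  local node="$1" file="$2" pattern
  while IFS= read -r pattern || [ -n "$pattern" ]; do
    case "$pattern" in ''|'#'*) continue ;; esac
    # The unquoted variable is intentional: the entry is used as a glob.
    case "$node" in $pattern) return 0 ;; esac
  done < "$file"
  return 1
}
```

For example, `autosign_mode /etc/puppetlabs/puppet/autosign.conf` followed by `whitelist_matches web01.example.com /etc/puppetlabs/puppet/autosign.conf` tells you whether a given node name would be signed without manual approval.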

External SSL Termination Enabled

If external SSL termination is enabled on the Puppet master, the master accepts an agent’s client certificate when it is specified in the X-Client-Cert HTTP request header instead of the TLS handshake, or potentially even skips the client certificate validation altogether, as it assumes that this already happened on a load balancer. This might allow a TLS client certificate/authentication bypass in scenarios with a misconfigured load balancer between the Puppet agents and the master.

First, check whether the Puppet server API can also be reached via unencrypted HTTP (then everything is broken):

curl -X GET http://PUPPET-SERVER:8140/puppet/v3/node/1

Second, check whether the Puppet master reacts differently on incoming requests with the HTTP request headers X-Client-Verify:True, X-Client-DN:* and X-Client-Cert: nil:

    curl -k -X GET https://PUPPET-SERVER:8140/puppet/v3/node/1
    # If the above command returns something like "Forbidden request", then:
    curl -k -H "X-Client-Verify:True" -H "X-Client-DN:*" -H "X-Client-Cert: nil" -X GET http://PUPPET-SERVER:8140/puppet/v3/node/1
    # If the above command returns something like "No certs found in PEM read from x-client-cert", then external SSL termination is enabled.

If you have privileged access to a (compromised) agent node, you can steal the node’s TLS client certificate from /etc/puppetlabs/puppet/ssl/certs and test whether the last curl command above allows you to retrieve information about and for this node. Therein, you can search for secrets and credentials in the configuration catalog files.

Otherwise, start up your favorite web proxy tool and play around with the X-Client-Verify header for the last curl command above, and fuzz the other headers as well. If you are lucky, you might find an authentication bypass, an input validation flaw or a misconfiguration vulnerability regarding acceptable client certificate formats and DNS names.
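When you repeat the header probe against many hosts or with many header variations, a small classifier keeps the results comparable. The sketch below is only an illustration: `classify_header_probe` is a hypothetical helper, and it knows nothing beyond the two message fragments quoted above, so everything else is reported as unknown and needs a manual look.

```shell
#!/usr/bin/env bash
# Hypothetical helper: maps a response body read from stdin to a rough verdict,
# based solely on the two message fragments quoted in the text above.
classify_header_probe() {
  local body
  body="$(cat)"
  case "$body" in
    *"No certs found in PEM read from x-client-cert"*) echo header-auth-enabled ;;
    *"Forbidden request"*) echo forbidden ;;
    *) echo unknown ;;
  esac
}

# Example usage (commented out so that sourcing this file sends nothing):
# curl -sk -H "X-Client-Verify:True" -H "X-Client-DN:*" -H "X-Client-Cert: nil" \
#   http://PUPPET-SERVER:8140/puppet/v3/node/1 | classify_header_probe
```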

Find more information about expected values for those HTTP request headers under:

You Managed to Compromise the Puppet Master?

You gained access to the Puppet master?

Once you have escalated your privileges to root (or just to the Puppet service user), you can gain mass remote code/command execution on all agent-nodes in the whole Puppet landscape (all application servers managed with Puppet), as demonstrated at [2].

Here are the high level steps to follow for mass-pwnage:

- Check that you have write access on the /etc/puppetlabs/code/environments/production/modules/ directory of the Puppet master (or on the files directory and the manifest file of any existing module)

- Generate a Meterpreter/ReverseTCP payload, e.g. using pwnpet.sh [3]

- Put the following payload files in /etc/puppetlabs/code/environments/production/modules/ on the Puppet master: attacker-module-name/files/meterpreter-payload and attacker-module-name/manifests/init.pp

- Start a reverse_tcp handler on your attacker machine, which you specified in the generated payload above

- Include attacker-module-name in /etc/puppetlabs/code/environments/production/manifests/site.pp

- Wait for a sudden flood of incoming reverse shells from the agent-nodes.

A similar attack could be possible by modifying the modules in the default module directories, but then you already need root privileges on the Puppet master to do this and then the approach above is still easier to pull off.

IMPORTANT: The configuration catalog files that the Puppet master server distributes to the agents might also be versioned in internal Git repositories of the customer.

If you are able to commit into those repositories, and your maliciously committed changes are rippling through to the Puppet master, it will also enable a mass Puppet landscape compromise.

Whitebox Assessment/Audit

For a whitebox assessment or configuration audit of the Puppet landscape, make sure you collected all important answers to the pre-consultancy questions mentioned above.

First, locate the Puppet master’s main configuration file /etc/puppetlabs/puppetserver/puppetserver.conf and take note of the specified file paths for all other security relevant configuration files (master-conf-dir).

Then you can proceed with the following specific audit checks:

- Check in the “puppet-admin” section of the /master-conf-dir/puppetserver.conf file which client TLS certificates are allowed to use the Puppet master server’s admin APIs. If you have access to one of the agent-nodes where the allowed client certificates are deployed, make sure to check whether they have secure file permissions set. If you can access an allowed TLS client certificate, you can also verify that it is accepted by the Admin API with one of the following API calls, executed from the Puppet master server (localhost). CLIENT-CERT.pem, CLIENT-KEY.pem and CA-CERT.pem are placeholders for the respective files:

    curl -si --cert CLIENT-CERT.pem --key CLIENT-KEY.pem --cacert CA-CERT.pem -X GET https://localhost:8140/puppet-admin-api/v1/jruby-pool/thread-dump
    curl -i --cert CLIENT-CERT.pem --key CLIENT-KEY.pem --cacert CA-CERT.pem -X DELETE https://localhost:8140/puppet-admin-api/v1/environment-cache
    curl -i --cert CLIENT-CERT.pem --key CLIENT-KEY.pem --cacert CA-CERT.pem -X DELETE https://localhost:8140/puppet-admin-api/v1/jruby-pool

- Check for weak file permissions on the Puppet configuration catalog files and directories (they MUST NOT be writable by any user other than the puppet service user or the root user):

    ls -laR /etc/puppetlabs/code/environments/ # In the output, check especially the subdirectories /production/modules/*

- Likewise, check the access rules for the API endpoints used by the Puppet agents by searching for overly permissive wildcards and missing auth requirements in the /etc/puppetlabs/puppetserver/conf.d/auth.conf file (API whitelist in HOCON format)

- Check whether the Puppet CA service (inbuilt CA service or external Puppet CA server) uses auto-signing or even naive signing for certificate signing requests (CSR) from new agents:

    grep "autosign" /etc/puppetlabs/puppet/puppet.conf # MUST NOT return "autosign = true", otherwise naive auto-signing is enabled

If an autosign directive is present and not set to false, make sure to check the basic auto-signing whitelist of allowed agent DNS/host names or the specified signing policy.

- Check if external SSL termination is enabled by searching for the allow-header-cert-info directive in the /etc/puppetlabs/puppetserver/conf.d/auth.conf configuration file. If this directive exists and is set to true, external SSL termination is enabled. However, make sure to also check the documentation of your target Puppet version with regard to this setting to determine whether external SSL termination might be prevented by another setting. You should also double-check with your customer whether external SSL termination is a requirement in the given environment. More information can be found at:

    - https://puppet.com/docs/puppetserver/latest/external_ssl_termination.html

    - https://puppet.com/docs/puppetserver/latest/puppet_conf_setting_diffs.html#ssl_client_header

    - https://puppet.com/docs/puppetserver/latest/puppet_conf_setting_diffs.html#ssl_client_verify_header

    - https://puppet.com/docs/puppet/latest/configuration.html#ssl_client_header

- Check whether the Jolokia metrics web service is enabled:

    grep "metrics-webservice" /etc/puppetlabs/puppetserver/metrics.conf # SHOULD NOT return "enabled: true"
    # Alternatively, or as an additional PoC, use the following curl command to confirm the issue:
    curl -k -X GET https://PUPPET-SERVER:8140/metrics/v2 # This shows detailed version information when the Jolokia metrics service is enabled.

- Check the certificate revocation list (CRL) settings in the /etc/puppetlabs/puppet/puppet.conf and webserver.conf configuration files. If at least one of the ssl- settings in webserver.conf is set but ssl-crl-path is not, the Puppet master server will not use a CRL to validate clients via SSL. Note that this setting is somewhat difficult to understand, so consult the documentation for your target Puppet version.

- Check whether strict_hostname_checking is disabled (see CVE-2020-7942 and the strict_hostname_checking config setting).
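Several of the audit checks above can be scripted so that they are repeatable across masters. The helpers below are a minimal sketch under assumptions: all function names are hypothetical, the file paths are passed in as arguments because the defaults differ between Puppet versions, and the line-based patterns assume one setting per line, which HOCON does not guarantee. Treat a hit as a pointer for manual review, not as a finding.

```shell
#!/usr/bin/env bash
# Hypothetical audit helpers; pass the paths that apply to your Puppet version.

# Lists files and directories below $1 that are group- or world-writable.
# Symlinks are skipped because their mode bits are not meaningful.
find_loose_permissions() {
  find "$1" \( -perm -0020 -o -perm -0002 \) ! -type l -print
}

# Succeeds if allow-header-cert-info is set to true in the given auth.conf.
header_cert_info_enabled() {
  grep -Eq '^[[:space:]]*allow-header-cert-info[[:space:]]*[:=][[:space:]]*true' "$1"
}

# Succeeds if the given webserver.conf sets ssl-cert, ssl-key or ssl-ca-cert
# but does not set ssl-crl-path.
crl_path_missing() {
  grep -Eq '^[[:space:]]*ssl-(cert|key|ca-cert)[[:space:]]*[:=]' "$1" &&
    ! grep -Eq '^[[:space:]]*ssl-crl-path[[:space:]]*[:=]' "$1"
}

# Succeeds if strict_hostname_checking is explicitly set to false in the given
# puppet.conf. An absent setting falls back to a version-dependent default,
# which this sketch does not evaluate.
strict_hostname_checking_disabled() {
  grep -Eq '^[[:space:]]*strict_hostname_checking[[:space:]]*=[[:space:]]*false' "$1"
}

# Example usage (commented out; run as a user who can read the files):
# find_loose_permissions /etc/puppetlabs/code
# header_cert_info_enabled /etc/puppetlabs/puppetserver/conf.d/auth.conf && echo "header auth on"
```

Group-writable paths are included on purpose: whether they are a problem depends on who is a member of the owning group, which has to be checked by hand.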