Update #1: Slides are available for download here.

In the course of our ongoing cloud security research, we’re continuously thinking about potential attack vectors against public cloud infrastructures. Approaching this enumeration from an external customer’s (speak: attacker’s 😉 ) perspective, there are the following possibilities to communicate with and thus send malicious input to typical cloud infrastructures:

- Management interfaces

- Guest/hypervisor interaction

- Network communication

- File uploads

As there are already several successful exploits against management interfaces (e.g. here and here) and guest/hypervisor interaction (see for example this one; yes, this is the funny one with that ridiculous recommendation “Do not allow untrusted users access to your virtual machines.” ;-)), we’re focusing on the upload of files to cloud infrastructures in this post. In our experience with major Infrastructure-as-a-Service (IaaS) cloud providers, the most relevant file upload possibility is the deployment of already existing virtual machines to the provided cloud infrastructure. Since some quick additional research shows that most of these providers allow the upload of VMware-based virtual machines and, to the best of our knowledge, the VMware virtualization file formats have not yet been analyzed for potential vulnerabilities, we want to provide an analysis of the relevant file types and present the resulting attack vectors.

Although there are a lot of VMware-related file types, a typical virtual machine upload functionality comprises at least two of them:

- VMX

- VMDK

The VMX file is the configuration file for the characteristics of the virtual machine, such as included devices, names, or network interfaces. A VMDK specifies the hard disk of a virtual machine and mainly consists of two types of files: the descriptor file, which describes the specific setup of the actual disk files, and one or more disk files containing the actual file system of the virtual machine. The following listing shows a sample VMDK descriptor file:

# Disk DescriptorFile

version=1

encoding="UTF-8"

CID=a5c61889

parentCID=ffffffff

isNativeSnapshot="no"

createType="vmfs"

# Extent description

RW 33554432 VMFS "machine-flat01.vmdk"

RW 33554432 VMFS "machine-flat02.vmdk"

[...]
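To make the structure of the extent lines more tangible, here is a minimal Python sketch that pulls them out of a descriptor like the one above. It is not part of our tooling; the names `Extent`, `EXTENT_RE` and `parse_extents` are made up for illustration, and the regular expression only assumes the simple layout seen in the listing (access mode, size, extent type, quoted path).

```python
import re
from typing import List, NamedTuple


class Extent(NamedTuple):
    access: str   # access mode, e.g. "RW"
    sectors: int  # size field as written in the descriptor
    kind: str     # extent type, e.g. "VMFS" or "VMFSRAW"
    path: str     # file or device the extent points to


# Assumed layout: <access> <size> <type> "<path>"; anything after the path is ignored.
EXTENT_RE = re.compile(r'^(RW|RDONLY|NOACCESS)\s+(\d+)\s+(\w+)\s+"([^"]*)"')


def parse_extents(descriptor: str) -> List[Extent]:
    """Return all extent descriptions found in a VMDK descriptor text."""
    extents = []
    for line in descriptor.splitlines():
        match = EXTENT_RE.match(line.strip())
        if match:
            access, size, kind, path = match.groups()
            extents.append(Extent(access, int(size), kind, path))
    return extents


SAMPLE = '''# Disk DescriptorFile
version=1
encoding="UTF-8"
CID=a5c61889
parentCID=ffffffff
isNativeSnapshot="no"
createType="vmfs"
# Extent description
RW 33554432 VMFS "machine-flat01.vmdk"
RW 33554432 VMFS "machine-flat02.vmdk"
'''

extents = parse_extents(SAMPLE)
for extent in extents:
    print(extent.access, extent.sectors, extent.kind, extent.path)
```

Comment lines and key/value pairs do not match the pattern and are simply skipped, so only the two extent lines end up in `extents`.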

For this post, it is of particular importance that the descriptor can reference multiple files or devices as the actual disk files containing the raw device data (in the listing, the so-called “Extent description”). The deployment of these files into a (public) cloud/virtualized environment can be broken down into several steps:

- Upload to the cloud environment: e.g. by using FTP, web interfaces, $WEB_SERVICE_API (such as the Amazon SOAP API, which admittedly does not allow the upload of virtual machines at the moment).

- Move to the data store: The uploaded virtual machine must be moved to the data store, which is typically some kind of back end storage system/SAN where shares can be attached to hypervisors and guests.

- Deployment on the hypervisor (“starting the virtual machine”): This can include an additional step of “cloning” the virtual machine from the back end storage system to local hypervisor hard drives.

To analyze this process more thoroughly, we built a small lab based on VMware vSphere 5 including

- an ESXi5 hypervisor,

- NFS-based storage, and

- vCenter,

everything fully patched as of 2012/05/24. The deployment process we used was based on common practices we know from different customer projects: The virtual machine was copied to the storage, which is accessible from the hypervisor, and was deployed on the ESXi5 using the vmware-cmd utility utilizing the VMware API. Thinking about actual attacks in this environment, two main approaches come to mind:

- Fuzzing attacks: Given ERNW’s long tradition in the area of fuzzing, this seems to be a viable option. Still this is not in scope of this post, but we’ll lay out some things tomorrow in our workshop at #HITB2012AMS.

- File Inclusion Attacks.

Focusing on the latter, the descriptor file (see above) contains several fields which are worth a closer look. Even though the specification of the VMDK descriptor file will not be discussed here in detail, the most important field for this post is the so-called Extent Description. The extent descriptions basically contain paths to the actual raw disk files containing the file system of the virtual machine and were included in the listing above.

The most obvious idea is to change the path to the actual disk file to another path, somewhere in the ESX file system, like the good ol’ /etc/passwd:

# Disk DescriptorFile

version=1

encoding="UTF-8"

CID=a5c61889

parentCID=ffffffff

isNativeSnapshot="no"

createType="vmfs"

# Extent description

RW 33554432 VMFS "machine-flat01.vmdk"

RW 0 VMFSRAW "/etc/passwd"

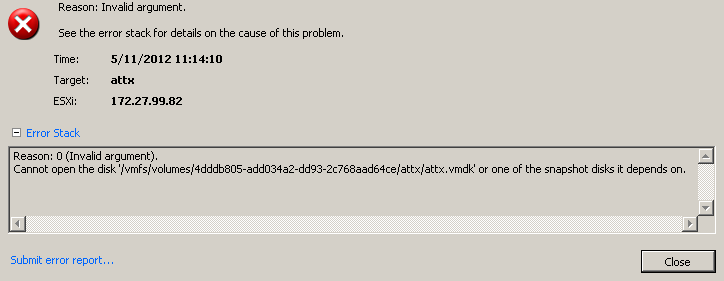

Unfortunately, this does not seem to work and results in an error message as the next screenshot shows:

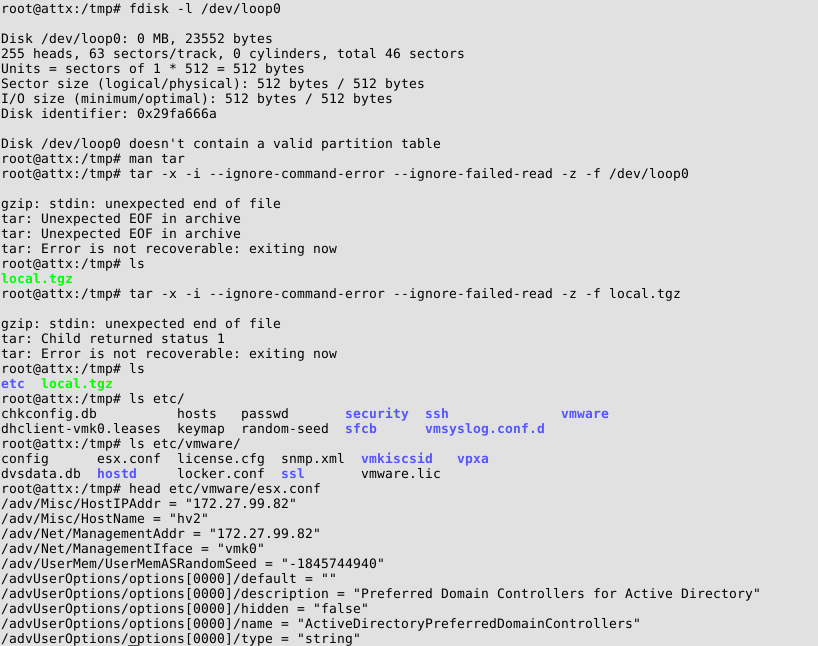

As we are highly convinced that a healthy dose of perseverance (not to say stubbornness 😉 ) is part of any hacker’s/pentester’s attributes, we gave it several other tries. As the file to be included was a raw disk file, we focused on files in binary formats. After some enumeration, we were actually able to include gzip-compressed log files. Since we are now able to access files included in the VMDK files inside the guest virtual machine, this must be clearly stated: We have/can get access to the log files of the ESX hypervisor by deploying a guest virtual machine – a very nice first step! Including further compressed log files, we also included the /bootbank/state.tgz file. This file contains a complete backup of the /etc directory of the hypervisor, including e.g. /etc/shadow – once again, this inclusion was possible from a GUEST machine! As the following screenshot visualizes, the necessary steps to include files from the ESXi5 host include the creation of a loopback device which points to the actual file location (since it is part of the overall VMDK file) and extracting the contents of this loopback device.
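As a rough illustration of why a loopback device with an offset is needed, the following Python sketch computes where each extent would start inside the disk as the guest sees it. It rests on two assumptions that are not spelled out in this post: that the extents appear to the guest as one disk, concatenated in the order they are listed, and that the size field counts 512-byte sectors. The function name `extent_offsets` is made up for this example.

```python
SECTOR_SIZE = 512  # bytes; assumption: extent sizes are given in 512-byte sectors


def extent_offsets(sizes_in_sectors):
    """Return the byte offset at which each extent starts inside the virtual disk."""
    offsets = []
    position = 0
    for sectors in sizes_in_sectors:
        offsets.append(position)
        position += sectors * SECTOR_SIZE
    return offsets


# Sizes taken from the sample descriptor: two extents of 33554432 sectors each.
offsets = extent_offsets([33554432, 33554432])
print(offsets)  # [0, 17179869184]
```

Under these assumptions, the second extent starts 16 GiB into the disk, and that byte offset is what a loopback device inside the guest would have to be created with (for example via the offset option of losetup) in order to expose only the data behind that extent.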

The screenshot also shows how it is possible to access information which clearly belongs to the ESXi5 host from within the guest system. Even though this allows a whole bunch of possible attacks, coming back to the original inclusion of raw disk files, the physical hard drives of the hypervisor qualify as a very interesting target. A look at the device files of the hypervisor (see next screenshot) reveals that the device names are generated in a not-easily-guessable way:

Using this knowledge we gathered from the hypervisor (note that at this point we’re relying on knowledge gathered through our administrative hypervisor access), it was also possible to include the physical hard drives of the hypervisor. Even though we needed additional knowledge for this inclusion, the sheer fact that it is possible for a GUEST virtual machine to access the physical hard drives of the hypervisor is a pretty big deal! As you still might have our stubbornness in mind, it is obvious that we needed to make this inclusion work without knowledge about the hypervisor. Thus let’s provide you with a way to access any data in a vSphere-based cloud environment without further knowledge:

- Ensure that the following requirements are met:

  - ESXi5 hypervisor in use (we’re still researching how to port these vulnerabilities to ESX4)

  - Deployment of externally provided (in our case, speak: malicious 😉 ) VMDK files is possible

  - The cloud provider performs the deployment using the VMware API (e.g. in combination with external storage, which is, as laid out above, a common practice) without further sanitization/input validation/VMDK rewriting.

- Deploy a virtual machine referencing /scratch/log/hostd.0.gz

- Access the included /scratch/log/hostd.0.gz within the guest system and grep for ESXi5 device names 😉

- Deploy another virtual machine referencing the extracted device names

- Enjoy access to all physical hard drives of the hypervisor 😉

It must be noted that the hypervisor hard drives contain the so-called VMFS, which cannot be easily mounted within e.g. a Linux guest machine, but it can be parsed for data, accessed using VMware-specific tools, or exported to be mounted on another hypervisor under our own administrative control.
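Looking at the third requirement from the provider’s side, the attack path depends on uploaded descriptors not being validated. The following Python sketch shows what a very simple check could look like: it flags extent paths that resolve outside the directory the virtual machine was uploaded to. This is only an illustration under stated assumptions and not a vetted countermeasure; the function name `suspicious_extent_paths` and the datastore path used in the example are hypothetical, and the check is purely lexical (it does not follow symlinks on the hypervisor).

```python
import posixpath
from typing import List


def suspicious_extent_paths(paths: List[str], vm_dir: str) -> List[str]:
    """Return the extent paths that resolve outside the VM's own directory.

    vm_dir must be an absolute path (hypothetical example:
    /vmfs/volumes/datastore1/machine).
    """
    base = posixpath.normpath(vm_dir)
    flagged = []
    for path in paths:
        # Relative paths are resolved against the VM directory;
        # absolute paths replace it entirely (posixpath.join semantics).
        resolved = posixpath.normpath(posixpath.join(base, path))
        if posixpath.commonpath([base, resolved]) != base:
            flagged.append(path)
    return flagged


flagged = suspicious_extent_paths(
    [
        "machine-flat01.vmdk",
        "/etc/passwd",
        "/scratch/log/hostd.0.gz",
        "../other-tenant/disk-flat.vmdk",
    ],
    "/vmfs/volumes/datastore1/machine",
)
print(flagged)
```

In this example everything except machine-flat01.vmdk is flagged. A real deployment pipeline would additionally have to decide how to treat extent types that point at raw devices and whether to reject or rewrite such descriptors.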

Summarizing the most relevant and devastating message in short:

VMware vSphere 5 based IaaS cloud environments potentially contain possibilities to access other customers’ data…

We’ll conduct some “testing in the field” in the upcoming weeks and get back to you with the results in a whitepaper to be found on this blog. In any case this type of attack might provide yet another path for accessing other tenants’ data in multi-tenant environments, even though more research work is needed here. If you have the opportunity you might join our workshop at #HITB2012AMS.

Have a great day,

Pascal, Enno, Matthias, Daniel

This is interesting stuff. The part where you actually deploy the VM with the disks is pretty vague. How did you put the bogus vmx in place? (ie, what user level/permissions) And then, when adding the vm, was that something on the actual esx box? When you deployed with vmware-cmd, did you do that remotely or on the hypervisor? If remote, what username did you use and what permissions did that user have?

It’s also interesting when you say “IaaS cloud provider” but then talk about ESX and using vmware-cmd, since VMware’s IaaS cloud offering is vCloud Director, and the options for deploying a vm there would involve picking an image from a catalog, or uploading an OVF; if you upload, the client actually has to submit the disks over HTTP.

Any IaaS VMware shops care to comment on whether or not they store the vmx and vmdk in the same directory (default)? It’d be nice if VMware allowed customers to enforce this, both from a management and security perspective.

Thanks for publishing your research. Keep up the great work!

PS: did you reach out to VMware? Are they still not offering bug bounties?

Hey Rod & mattw,

these questions are discussed in-depth in the workshop at Hack in the Box Amsterdam in three hours.

Please follow this space for another update on this as well as slides of the workshop.

Cheers

Florian

So in order to exploit this vulnerability I have to essentially be an administrative or root user. So why would I go to all this trouble when I can just download the other tenant’s VM’s anyway given my access level? If you are root, you are root. But if properly configured root won’t have access to the hosts directly, only via vCenter. All actions will also be visible in audit logs.

Few if any cloud providers I know deploy VM’s in this manner. VM’s that are templates in the catalog are generally vetted and checked to be authentic before being added to the catalog. Cloud providers would be executing operations via the vCloud API’s, not through vCenter or the hosts directly. But that doesn’t mean it couldn’t happen. A rogue system admin may decide to exploit the vulnerability and essentially enable a back door.

Your statement re accessing other customers data is true for any cloud regardless of technology where you have a super user that has access to all that information. Proper implementation of roles and responsibilities and security controls, separation of duties, least privilege all reduce the risks. As does hiring trusted people in the first place.

Michael,

thank you for your comment. Sorry if we did not lay out in detail that we don’t need to be root for this attack. The important step is the enumeration of the devices, which is described in the complete five-step attack path at the end of the post and can also be done without additional knowledge about the hypervisor.

And, as you say, the attack depends on the possibility to upload virtual machines to the cloud provider (as is also emphasized in the complete attack path). However, we have a different perception of this, as we know both cloud providers (e.g. Terremark) and customer environments which allow the upload of virtual machines in general and VMDK files in particular.

There will be quite some follow-up research emphasizing these important points in appropriate detail.

thx & have a nice weekend,

Matthias

Michael,

please find the more comprehensive answers to your questions in our follow-up post.

Thanks & have a nice one,

Matthias

It would seem that we need two related controls.

1. No vm should ever be able to reference anything that is not in /vmfs/volumes. (including cdroms and floppies)

2. Perhaps optionally, no VM should be able to reference anything that is not in a folder with the same name as the folder in which the .vmx file resides. Thus if two vmdk files should be on different volumes, they have to be in directories with the same name as is the normal default.

Hi, nice blog. Just curious to see if a similar attack has been tried on XEN and Hyper-V. It’s common knowledge that the VMware cloud providers outnumber the others and so are more vulnerable at this point, but as their competitors grow, what will their vulnerabilities be, or will they pay attention to blogs like yours and plan?

Lawrie,

thanks for your feedback. As of now, we have only had a look at OVF and the processing in the vCloud infrastructure, which was not vulnerable to the attack. However, additional file formats are on our research list, so we will keep you posted in case we have any news.

Thanks,

Matthias

hey guys ,

That’s an incredible article, regret that I’m late to read this. I tried performing the steps mentioned above, but I don’t find two or more VMDK files when the VM is configured to use only one disk. How do we exploit this in a scenario where there is just one VMDK file?

many thanks for sharing

Shaun,

glad to hear people are playing around using our posts 😉

We’re not quite sure whether we understood your problem without seeing the actual VMDK file (there are quite some possible variations), so, supposing you’re playing around on your own infrastructure, feel free to reach out to us by mail in order to be able to attach the (relevant part of the) VMDK file.

Thanks,

Matthias

Is this information still valid as of vSphere 5.1?

Henrik,

yes, this information is still valid as of vSphere 5.1.

Thanks,

Matthias

Hi Guys,

Well done for some really interesting work. I am fairly new to this and would like to know if there are some detailed instructions for this exploit, i.e. I have a test environment on a NetApp SAN and the local hard disk storage. Would like to create a VM and break out of the VM to the host. How do I configure the RAW disk settings?

Thanks a lot for the excellent and thought-provoking write-up.

John

Has this been submitted for a CVE or a vmware security announcement?

Hi,

VMware’s stance is essentially:

– “our liberal approach to VMDK uploading is a feature, not a bug”.

– “if you don’t like that, go buy vCloud Director (and use OVF files then)”.

A year ago, after HITB AMS 2012, they issued a Q&A-style statement to selected customers, classified “confidential”. You might ask your sales rep for that …

best

Enno

Hi,

I’ve also been conducting some security research into ESXi and found a number of similar vulnerabilities (amongst others). I’ve just become aware of your research.

I have seen some practical attack vectors in my research.

While I would not be surprised if VMware tried to characterize some behaviours as features and not bugs, it is not clear whether you have contacted VMware’s Security Response Center. Have you?

I am currently working with a vulnerability handling service in an unrelated report involving VMware. I’ve previously had unsatisfactory and off-policy responses from VMware to privately reported vulnerabilities, but will tempt fate and attempt to contact VMware SRC about a subset of my discoveries that intersect with yours.

Please feel free to contact me privately and securely if you would like to discuss sharing research information under a responsible / coordinated disclosure framework.

Shanon,

we’re very interested to hear that there are further research results out there. We would be happy to get in contact with you (my mail is mluft@ernw.de), as we had similar experiences with VMware’s security responses in the past and are always interested to share research results.

Thank you & have a great Sunday,

Matthias

Which browser are you on?

Thanks //Florian